The perception of ChatGPT is a lot different from reality. What it can do is considerably overhyped compared to what it actually does: predict the next word it should give to the user. The folks who built ChatGPT believe that if they keep scaling it, i.e. training it on more data, the accuracy of its predictions will improve. But even if ChatGPT gets to a point where it produces fewer errors, it may never reach 100% accuracy without a breakthrough innovation. And enterprises might hesitate to blindly adopt a tool that doesn’t give reliable outcomes to their customers.
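To make "predict the next word" concrete, here is a deliberately tiny sketch in Python. It is not how ChatGPT is implemented: it only counts which word follows which in a made-up sentence, whereas a large language model learns far richer patterns from vastly more text. The function names and the sample corpus are illustrative assumptions.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical toy corpus, used only for illustration.
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept"
model = train_bigram(corpus)
print(predict_next(model, "the"))
```

Even this toy shows why more data helps but cannot guarantee correctness: the prediction is only the most likely continuation seen so far, and a word the model has never encountered yields no sensible answer at all.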

So what is the point of making an ambitious technology like ChatGPT? Is it an artificial general intelligence (AGI) system or a weapon of mass destruction? Let’s break it down.

The Good

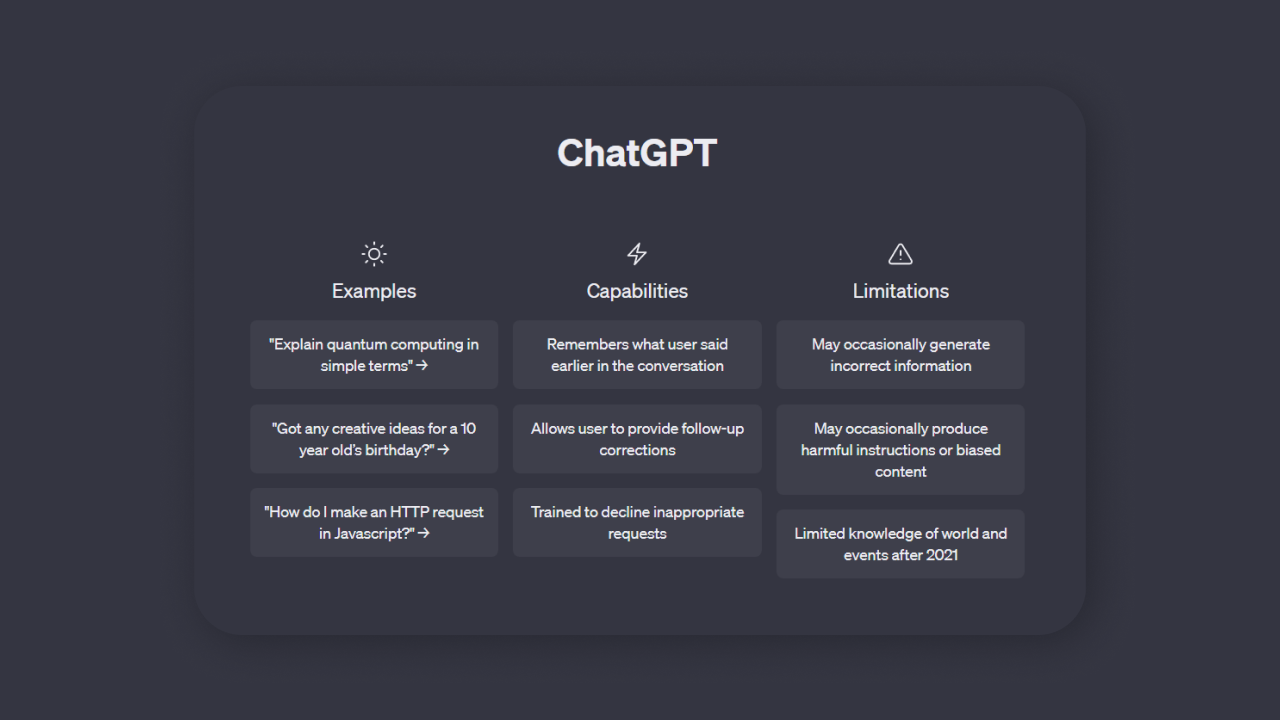

ChatGPT is most definitely in a hype cycle, but one cannot deny its ability to interpret a question posed in natural language and return a relevant answer. That ability alone gives it a wide range of useful, meaningful use cases.

For instance, Microsoft 365 Copilot (built using GPT-4) enables users to create basic presentations and documents with just a few instructions, making it a good productivity tool that gives users a head start on their projects. The same kind of technology is also used effectively in Microsoft Teams (and Zoom) to summarize virtual meetings, both for participants who could not attend and for attendees who want minutes. All they have to do is prompt the system about the parts of the discussion that concern them.

ChatGPT is also a great tool for brainstorming ideas and approaches. Professionals like programmers and writers, who need to produce code and content consistently, can become a lot more productive when ChatGPT does the bulk of the grunt work and they verify and edit the output for accuracy. It also means a lot less dependency on junior hires to scale up work in specific areas. How much value users get depends on how they query the tool. Methods like prompt engineering, which means wording a prompt deliberately so that it captures the user’s intent, context and constraints, draw more relevant output from GPT systems.
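As a rough illustration of prompt engineering, the sketch below assembles a structured prompt from a role, a task, some context and a few constraints. The `build_prompt` helper and its fields are hypothetical, one of many reasonable layouts rather than an official template, and the code only builds the text; it does not call any model.

```python
def build_prompt(role, task, context="", constraints=(), output_format=""):
    """Assemble a structured prompt string from its parts (illustrative layout)."""
    parts = [f"You are {role}.", f"Task: {task}"]
    if context:
        parts.append(f"Context: {context}")
    if constraints:
        parts.append("Constraints:")
        parts.extend(f"- {c}" for c in constraints)
    if output_format:
        parts.append(f"Output format: {output_format}")
    return "\n".join(parts)


# A vague prompt versus an engineered one for the same request.
vague = "Write something about our product launch."
engineered = build_prompt(
    role="a marketing copywriter for a B2B software company",
    task="Draft a launch announcement email.",
    context="The product is a workflow automation tool for IT help desks.",
    constraints=["Under 150 words", "No jargon", "End with a call to action"],
    output_format="Subject line, then body text",
)
print(engineered)
```

The engineered version leaves the model far less to guess, which is the whole point: the more of the user's intent the prompt carries, the less of it the tool has to invent.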

ChatGPT boosts the capability of the creator by providing the initial steps and drafts required in any creation process. It also helps that ChatGPT does not suffer from writer’s block or imposter syndrome and easily conquers the blank-page challenge that most creators experience.

Industry experts feel that ChatGPT could be a great tool for Productivity Enhancement due to its innate ability to help users discover new things and get things done faster. And companies that identify high-quality use cases to plug ChatGPT into their product can leverage its natural language capabilities to provide a better customer experience.

The Bad

ChatGPT has its merits, but one cannot stress enough that it has been overhyped to gain user traction and to instigate the search engine wars.

Microsoft has made a significant investment (a reported 10 billion USD) in OpenAI. Up until now, it has been badly losing to Google in the internet search race. Google dominates online search and earns big money through the ads it runs alongside results. Microsoft stands to lose nothing by integrating ChatGPT into its search engine (Bing Chat). ChatGPT gives it a fighting chance to pull some traffic away from Google and to monetize those searches through ads in the chat widget. Google, for its part, is playing catch-up with its very own conversational AI tool, Bard.

Companies are also creating ChatGPT plugins that solve the problem of performing actions in a destination system. But ChatGPT passes the third-party system its own interpretation of what the user asked in natural language. That interpretation may not be accurate, and when it is not, it taints the outcome.

The Ugly

Microsoft and OpenAI are facing lawsuits for allegedly using code and content from credible sources without permission to train ChatGPT’s Large Language Models (LLMs), which the plaintiffs consider a massive breach of intellectual property.

Stack Overflow has banned the use of ChatGPT to answer questions posted on its platform.

People are worried that ChatGPT will take their jobs, but that worry has no basis in reality. Realistically speaking, we should worry about how this technology, or a variant of it, can be misused. Now that it has been proven that these LLMs can be built, well-funded individuals or entities could create their own language models without security guardrails and use them to carry out cyberattacks that inflict harm on targeted users.

ChatGPT’s Natural Language Processing (NLP) capabilities are by far the best the world has seen. What remains to be seen is whether it can be applied, relevantly and purposefully, in a truly game-changing way.

This article was originally published in CXOToday.